ITAD Blog

Analyzing Hardware Refresh Cycles In The Data Center 2020


From advice on decommissioning to insights around timing, get the latest research and analysis on hardware refresh cycles in the data center.

Getting the timing right on hardware refresh cycles in the data center is both a science and an art. With so many considerations to weigh, the decision on when to pull the trigger (and how large to go) is always a tricky call for companies and their CIOs.

Here’s our analysis of current approaches and trends around hardware refreshes in the modern-day data center.

Major Trendlines In Hardware Refresh Cycles

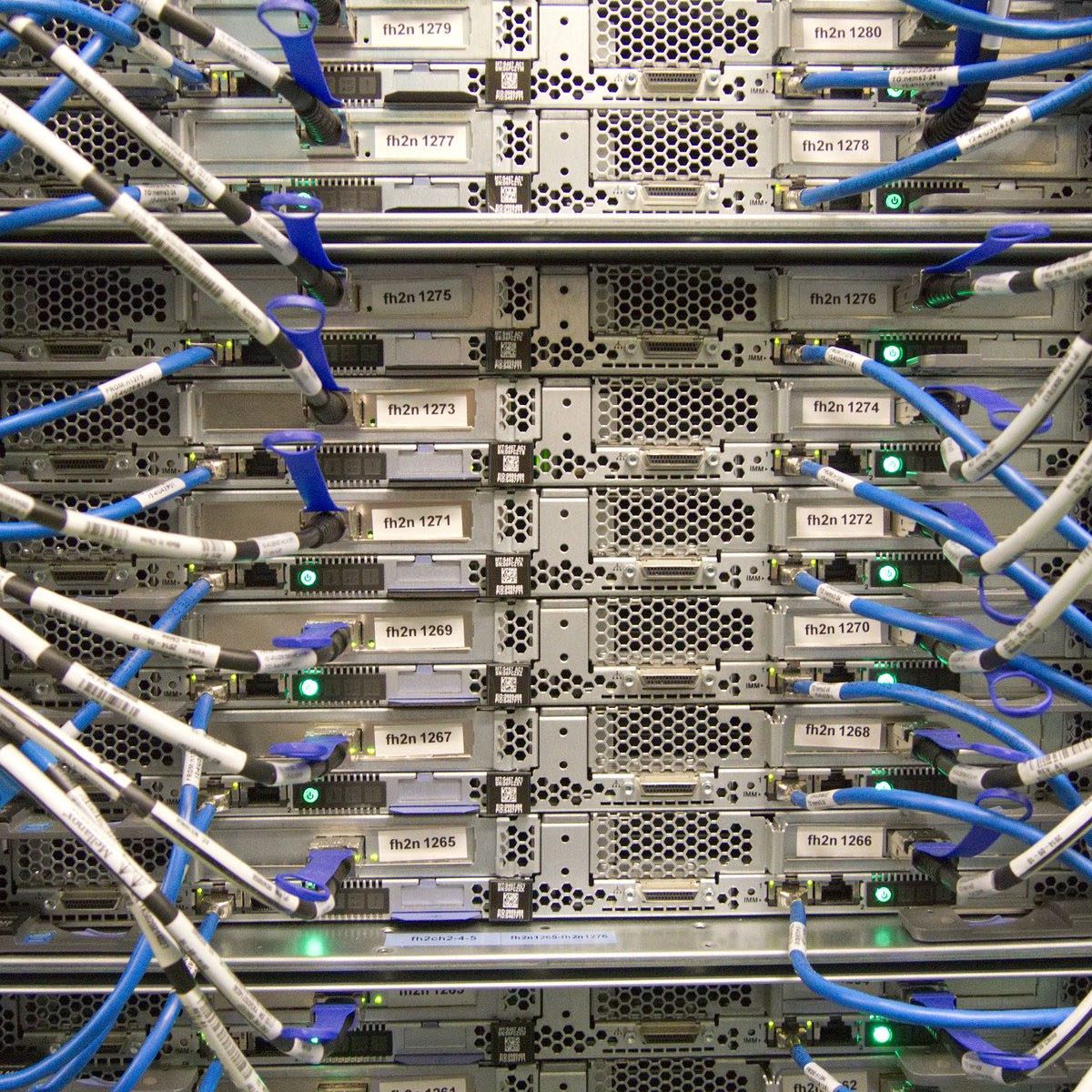

Despite the accelerated growth of cloud computing (as widely documented by the likes of Synergy Research Group and Canalys), the on-premises data center is still very much alive and kicking.

At least, that’s according to the 2020 Uptime Institute data center survey, which projected that in 2022 a majority (54%) of IT infrastructure would continue to run in enterprise data centers, down slightly from 58% at the time of the survey.

Despite sharp cloud growth, Uptime’s research reveals the scale of on-premises infrastructure.

It might be natural to think that the continued ubiquity of on-premises computing will drive more frequent hardware refreshes as companies seek to avail themselves of the latest and greatest technology to power their data centers.

However, the opposite is true—refresh cycles, according to the Uptime Institute’s survey, are lengthening rather than shortening. The most common time between refreshes is now five years, compared with three years in 2015. That is a significant shift in a relatively short space of time.

Closer examination of Uptime’s survey results reveals a complex and shifting picture but with a clear underlying direction of travel:

- In 2015, the largest share of respondents (37%) listed three years as the most common time between server refreshes.

- In 2020, this figure dropped to 26%, while the percentage reporting five years as the most common time between refreshes rose from 20% to 31%.

So, what’s driving this see-saw effect? Uptime Institute suggests that slowing gains in power usage effectiveness (PUE) for data center hardware have blunted a major incentive for more frequent server refreshes: the performance advantages typically secured through a refresh are increasingly incremental. Moore’s Law is delivering diminishing returns.

Uptime Institute 2020 data center report

PUE is certainly one thing. Other factors we suspect at play are:

- Hardware-as-a-service: The adoption of hardware leasing models. These days enterprise vendors love to bundle their hardware products in subscription models. Cisco is the latest big player to consider jumping into the fray with hardware (it already sells much of its software as a subscription), while Dell and HPE have been plowing this furrow for some time. In part they’re responding to customer demand: who wants to incur all that CapEx when the technology landscape is changing so rapidly? At the same time, the enterprise OEMs are rushing to shore up on-premises market share before the hyperscalers (AWS, Google Cloud, Microsoft Azure, et al.) take it for themselves through their maturing private and hybrid cloud offerings.

- Software vs hardware: The hyperscalers have long proven that commodity hardware is good enough to run industrial-grade workloads. They have led the field in developing high-performance equipment for the most demanding applications, leveraging software to get the most out of the machines. The enterprise typically follows the lead of the hyperscalers when it comes to infrastructure management. New hardware is expensive, and mid-size and enterprise CIOs are very aware that software upgrades or a different systems approach can breathe new life into previously flagging infrastructure.

Why buy a new car if, with a little bit of TLC and some whizzy re-engineering, your old one continues to run just fine?

- Budgetary limitations: The commercial case for new IT equipment is always subject to scrutiny, and there’s little doubt that the coronavirus pandemic has further tightened the screws. Indeed, recent IDC research shows 2020 hardware spending taking a disproportionate hit as companies downgrade equipment investments in response to the disruption. Either way, there remains significant appeal for enterprises in refreshing their hardware, particularly where budget is less of an issue. Investing in new technology will almost always bring some level of performance upside, and older equipment will need replacing at some point anyway.

Industry Arguments For Hardware Refresh

Unsurprisingly, enterprise vendors remain eager to push hardware upgrade cycles. Take Dell, which, in a 2019 Forrester report it commissioned, warns what it calls “aging firms” of the opportunity costs of not upgrading equipment in a timely fashion.

“The consequence is a lack of agility and unmet business needs,” the report authors stated. “Aging infrastructure and limited progress on SDDC (software-defined data center) technology adoption are hindering IT organizations from meeting business needs.”

“The result? Time-consuming application updates, application performance that does not meet end-user needs, and infrastructure that cannot effectively support emerging workloads like artificial intelligence and machine learning.”

The report found that 40% of server hardware deployed at company data centers was, on average, more than three years old—which, the report authors argue, is far from optimal for performance.

“Refresh your servers more frequently, ideally less than every three years,” they advise. To demonstrate ROI, they recommend using key performance indicators (KPIs) such as:

- time-to-deploy infrastructure

- server-to-admin ratios

- mean-time-to-approve changes

- team attrition

Forrester/Dell 2019 report on data center hardware refreshes

Interestingly, the Forrester research, based on surveys conducted in December 2018, placed the average time between refresh cycles at 3.98 years. This is broadly consistent with the lengthening in the cycle from three to five years identified by Uptime Institute in its 2020 survey.

From most perspectives, refresh cycles are indeed getting longer. And perhaps most importantly, the hyperscalers, always at the vanguard of industry change, are bearing this out.

Refresh Cycles And The Circular Economy

While enterprise vendors like Dell advocate for more frequent refresh cycles, it’s important to watch what the hyperscalers are doing to properly gauge which way the wind is blowing.

Consider Microsoft. It currently operates 3 million servers (and counting) across its massive data center portfolio—a huge amount of data center equipment by any measure. Notably, each server has an average lifespan of five years, aligning with the trends highlighted in Uptime Institute’s research.

Also indicative is what Microsoft plans to do with its retiring server hardware when it comes to the end of its useful life. Similar to Google, Microsoft is deepening its commitment to circular economy practices, which emphasize repair and reuse over disposal. Indeed, between now and 2030, Microsoft aims to reduce the level of e-waste from its data centers by 90% through a combination of repair management, reuse, and recycling, largely orchestrated by intelligent software.

“Using machine learning, we will process servers and hardware that are being decommissioned onsite,” Microsoft president Brad Smith remarked in a statement from August 2020. “We’ll sort the pieces that can be reused and repurposed by us, our customers, or sold. We will use our learnings about reuse, disassembly, reassembly, and recycling with design and supply chain teams to help improve the sustainability of future generations of equipment.”

This trend toward repair and reuse will not only further lengthen the time horizon around hardware refreshes but support the concrete expansion of a secondary market for used data center equipment—certainly in the enterprise and mid-size data center—that has been largely peripheral to this point. Think of it as circular hardware.

“In Amsterdam, our Microsoft Circular Center pilot reduced downtime at the data center and increased the availability of the server and network parts for our own reuse and buy-back by our suppliers. It also reduced the cost of transporting and shipping servers and hardware to processing facilities, which lowered carbon emissions.”

Microsoft president Brad Smith

Planning Your Hardware Refresh

Let us be clear: the upsides of well-planned technology refreshes are real. “Optimized hardware refresh cycles reduce e-waste by over 80% and achieve 15% better performance while lowering acquisition costs by 44%,” according to a Supermicro report published at the end of 2018.

Even within the context of lengthening refresh cycles, hardware needs to be replaced at some point. While this is certainly true of compute capacity, the case is even clearer for storage. Businesses cannot ignore the relentless growth in data generation: unless you’re migrating data to the cloud, you need additional capacity on-premises to store it.

Of course, first you will want to run a cost-benefit analysis against any proposed refresh, asking such questions as:

- What are the performance and energy savings you expect to derive from the upgrade?

- What are the labor and capital costs associated with the work?

- How will you stage the work to minimize disruption to the operation?

- Does your refresh plan sufficiently prepare your organization for future workload demands?

- What are your storage needs compared with computing?
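The first two questions above lend themselves to a quick back-of-the-envelope check. Below is a minimal sketch of a simple payback calculation in Python. The function name, its parameters, and every dollar figure are hypothetical placeholders for illustration, not numbers from any of the reports cited here, and the model deliberately ignores discounting, downtime, and financing.

```python
def simple_payback_years(capital_cost, labor_cost,
                         annual_energy_savings, annual_performance_value):
    """Years needed for annual benefits to cover the upfront refresh cost.

    All inputs are assumptions supplied by the reader, in the same currency.
    """
    upfront = capital_cost + labor_cost
    annual_benefit = annual_energy_savings + annual_performance_value
    if annual_benefit <= 0:
        # With no yearly benefit, the refresh never pays for itself.
        return float("inf")
    return upfront / annual_benefit


# Hypothetical example figures, chosen only to show the arithmetic.
payback = simple_payback_years(
    capital_cost=400_000,
    labor_cost=50_000,
    annual_energy_savings=60_000,
    annual_performance_value=90_000,
)
print(f"Estimated payback: {payback:.1f} years")
```

If the estimated payback is longer than the refresh interval you are planning for, that is a signal to revisit the scope or the timing of the upgrade.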

These are important questions. But it’s equally clear that data center operators often remain unsure about how to get the most out of the hardware they earmark for retirement. Are you asking yourself:

- What is the value residing in the IT equipment you’re replacing?

- How can you claw back some of the investment in order to offset the upfront costs of the upgrade?

- What equipment can you redeploy internally, and what can you decommission and earmark for secure remarketing?
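One way to start answering these questions is to list the retiring equipment and sort it into redeploy and remarket piles. The Python sketch below is only an illustration under stated assumptions: the `Asset` fields, the three-year age cutoff, the asset names, and the resale values are all hypothetical, and a real assessment would rely on quotes from a remarketing partner and on your own redeployment criteria.

```python
from dataclasses import dataclass


@dataclass
class Asset:
    name: str
    age_years: float
    est_resale_value: float  # hypothetical estimate, e.g. from a remarketing quote


def plan_disposition(assets, redeploy_max_age=3.0):
    """Split assets into redeploy and remarket lists and total the resale value.

    The age cutoff is an assumed rule of thumb, not an industry standard.
    """
    redeploy = [a for a in assets if a.age_years <= redeploy_max_age]
    remarket = [a for a in assets if a.age_years > redeploy_max_age]
    recovered = sum(a.est_resale_value for a in remarket)
    return redeploy, remarket, recovered


# Hypothetical inventory of equipment being replaced.
retiring = [
    Asset("db-01", 2.0, 1500.0),
    Asset("web-07", 5.0, 400.0),
    Asset("stor-03", 6.0, 250.0),
]

redeploy, remarket, recovered = plan_disposition(retiring)
print("Redeploy internally:", [a.name for a in redeploy])
print("Remarket:", [a.name for a in remarket])
print("Estimated value recovered:", recovered)
```

The recovered amount can then be subtracted from the upfront cost of the upgrade to see how much of the investment is offset.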

Author: Horizons Technology